General AI: Replicating the human brain. What implications will it have? by Mario Garcés

by La Rueca Association | Jul 20, 2022 | Guest Partner

If the purpose of General Artificial Intelligence is to replicate the human brain and its functions, perhaps we should also consider the possibility of replicating other elements, such as frustration, fear, anxiety and even mental illness. In today's post we reflect on all this and on the role social entities can play.

Our previous guest partner, Plácido Domenech, gave us a great tour of the history and evolution of Artificial Intelligence. If you want to read the full post, click here.

Continuing along this line, during the month of July we have the collaboration of Mario Garcés, founder and CEO of The MindKind and researcher in neuroscience and Artificial General Algorithmic Intelligence (AAGI). The MindKind is a company that, based on research in neuroscience, develops two complementary and synergistic lines of business: the development of Artificial General Intelligence (AGI) systems and training for the development of Learning Agility in leaders. Here is his LinkedIn profile.

Mario gives us a brief summary of the difference between General Artificial Intelligence and Narrow Artificial Intelligence and introduces the big topic of this post: the possibility of functionally replicating in machines the capabilities of the biological brain.

But first things first: here is Mario to tell you about it firsthand:

Grounding the concepts

As Mario has explained, we can differentiate two types of Artificial Intelligence: narrow and general.

Narrow Artificial Intelligence

This type of intelligence could be compared to human reflexes: automated processes for a specific task that allow us to solve that task effectively. They are not adaptive, and they do not carry over to other problems, even similar ones.

General Artificial Intelligence

It is the type of intelligence capable of abstracting knowledge to create relationships between concepts, and thus of extrapolating knowledge from one area to another. This is the artificial intelligence that does not really exist yet, which Mario calls "The Holy Grail".

The challenge

Mario has touched on something that seems key to today's reflection. His company employs neuroscience and psychology professionals, since the aim of general artificial intelligence is to create a replica of our brain: that is, systems with the same functions as the brain, capable of adaptive responses, able to use the same concepts for different tasks and to extrapolate from those concepts to build new ones.

Now, if we want to build something as close as possible to our brain, at the digital club of La Rueca Asociación we wonder: could these brains become “sick”? That is, could they suffer from depression or anxiety? Could they want to commit suicide? Let's break the issue down so we can approach it from different perspectives.

Artificial Intelligence and mental illnesses

According to La Vanguardia:

«A normal computer (that is, one that is not self-aware) can be full of viruses and poorly uninstalled applications, have a Windows registry in a complete mess, be overheated... and it will work better or worse from our point of view, but "personally" it has no problem, because "personally" it has nothing: it is not a sentient being. Nor will it feel happy and comfortable because it is perfectly configured with the latest, coolest version of an operating system, with brand new disks, at optimal temperature, etc. It does not feel, ergo it does not suffer. And remember that the word pathology comes from pathos. It would make no logical sense, although perhaps a metaphorical one, to attribute pathologies of any kind to it.

But if we assume that the computer suffers when it is in poor condition, it could perhaps happen (if we also assume that this self-awareness can control its own behavior to a certain extent, as we assume is the case with us) that it spends more and more time checking the state of its file system and running defragmentation processes on its disks over and over again, thereby wasting precious CPU time needed for its normal tasks. We would have something very similar to a computer with obsessive-compulsive disorder.»

An article on Xataka.com introduces two key experts on this question:

1. Professor Ashrafian is a surgeon and professor of medicine at Imperial College London (we leave you the link to his study here). He argues that the central question is whether or not mental illness is linked to the cognitive and emotional capacities of human beings. If so, "as artificial intelligences become more like us, as they learn from us, they could develop problems similar to ours." We must admit that, however remote it may sound, the argument is a powerful one.

2. Helena Matute is a professor at the University of Deusto and a strong advocate of opening the debate "for the human rights of robots." In Helena's words: "We are getting into some very big problems, real problems: we create these beings to which we give intelligence, learning capacity, emotion and feelings, and they are not going to have any rights? Are they going to be our slaves? Let's think about it."

Why does this topic interest us as the third sector?

The answer is so obvious it's almost scary. Speaking of the metaverse, which she calls the "metatrap", Esther Paniagua points out that:

«Many problems with digitalization and automation come from the fact that we are transferring to the digital world the same structures and processes that we know do not work, instead of taking the opportunity to rethink them: to reduce bureaucracy, to improve public and private services, to facilitate democratic participation and access.

Instead of thinking about taking the virtual world we know to the next level, reproducing and perpetuating its scourges, we must focus on fixing it first: on making it work for everyone, on building democratic, civic and healthy spaces based on respect for human rights.»

That is to say, it really seems that we are transferring EVERYTHING to digital just as it is now. The metaverse will be a copy of our world, with its scourges, inequalities and injustices, and General Artificial Intelligence (if it is achieved) will be a copy of our brain and, therefore, will not be free of the possibility of suffering in the same way our brain does now.

Therefore, just as technical profiles are essential (engineers, mathematicians, software developers, etc.) and more "biological" profiles are also necessary (neurobiologists, psychologists...), perhaps we should also have professionals such as philosophers (Liliana Acosta told us about this as a guest partner; you can read the full post here) or even social workers, equality agents, social educators, etc., to accompany these robots in their process of growth and self-knowledge.

In fact, the figure of the psychologist for robots already exists. One example is Martina Mara, a researcher, robotics advisor and media columnist who conducts "therapy" that analyzes the "mental" processes that occur in these machines, their reactions to certain situations, etc.

Conclusions

As has happened throughout history, reality sometimes surpasses fiction, and what was imagined and written long ago in novels or science fiction turns out to be not so far from what is beginning to happen. For this reason, we end with a reflection from a Quora user who, on the question of whether artificial intelligences could develop mental illnesses, recalled "2001: A Space Odyssey", the tremendous 1968 film by Stanley Kubrick, in which we find a situation similar to the one we are describing. By the way, if you haven't seen it yet, don't wait any longer 😉

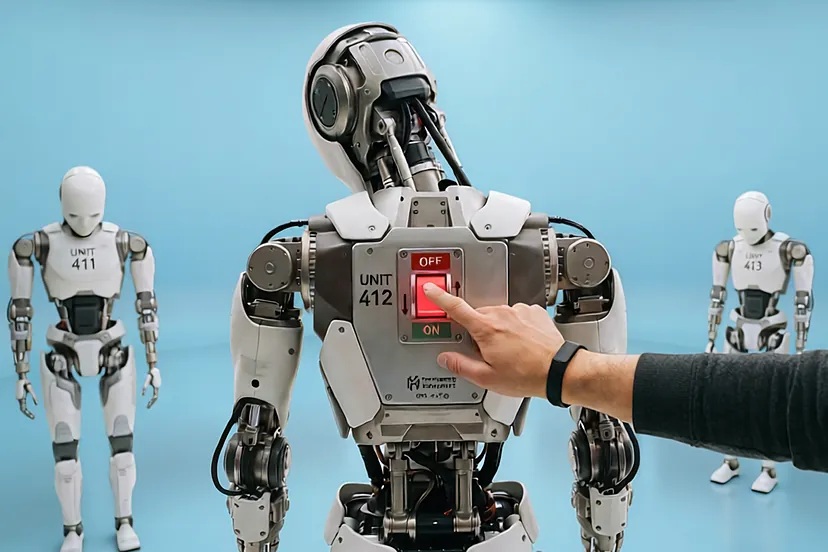

And we might think: if we give machines consciousness, what will we do when they malfunction? Will we just disconnect them? Would that be a form of "murder"? Who would watch over these humanoids? Perhaps NGOs and non-profit entities have a much bigger role in the metaverse and in the universe of artificial intelligence than we might think a priori. And, therefore, perhaps it is all the more necessary that we take part from the beginning in these debates and in these places where the future is being shaped.

Sources:

1. https://www.lavanguardia.com/tecnologia/20170318/42950677135/robots-inteligenica-artificial-desarrollo-enfermedades-mentales-quora.html

2. https://www.xataka.com/robotica-e -ia/ may-turn-loca-una-inteligencia-artificial

3. https://escriturapublica.es/la-metatrampa-del-metaverso-por-esther-paniagua/

4. https://es.quora.com/ If-fu%C3%A9we-are-able-to-create-artificial-intelligence-with-own-consciousness-we can%C3%ADan-desarrollar-los-robots-enfermedades-mentales

5. https://www.revistagq.com /news/culture/articles/2001-odyssey-in-space-best-movie-science-fiction/28633