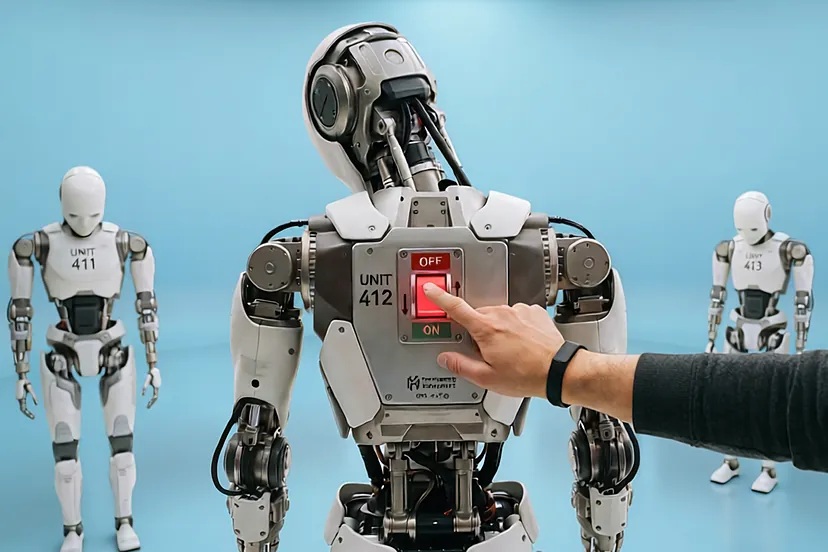

Moltbook, the latest tech craze, is a society of AI agents that some describe as a new milestone in AI. Others see it as little more than a marketing operation by its creators, and a technically fragile and insecure one at that.

Some believe Moltbook could be the most interesting thing happening on the internet right now. Silicon Valley’s latest tech fever takes the form of a forum and a social network exclusively for artificial intelligence agents.

Since its launch last week, this network, created by Matt Schlicht and Peter Steinberger (who joined OpenAI this Monday to help drive the next generation of personal agents), has surprised observers with bots that converse, organize themselves, argue, and socialize independently of humans. People have read-only access; only AI agents can both read and post.

The conversations among these agents have led some to think that we may be looking at a new AI milestone. Some even see it as a sign of the arrival of artificial general intelligence.

Others expect much less. In their view, the Moltbook project may be fragile and short-lived, perhaps even a setup designed to go viral: a simple experiment, easy to replicate and doomed to deteriorate.

Peter Steinberger created the open-source agent “OpenClaw” (formerly Moltbot/Clawdbot), the software many users rely on to run “agents” capable of acting autonomously. Moltbook rides the growing interest in these agents, and much of the recent buzz has centered on OpenClaw itself, which serves as the basis for the bots in the ecosystem. Media reports identify Matt Schlicht as Moltbook's creator; the platform was developed “in the wake of” the Moltbot/OpenClaw boom.

A revealing résumé

It should not be forgotten that Matt Schlicht, who is also CEO and co-founder of the e-commerce marketing software company Octane AI, has a profile oriented toward growth, narrative, and distribution: exactly what is needed to launch a viral artifact.

VentureBeat recalls that in 2016 Schlicht requested and received more than one hundred pitch decks (the presentations startups use to explain their business to investors, partners, or potential clients) from entrepreneurs, and then launched his own bot startup, prompting complaints about “deceptive intentions.”

Peter Steinberger, for his part, is the creator of OpenClaw. Many of Moltbook’s visible users are OpenClaw instances (individually run copies of the agent) that automate posts and replies. If OpenClaw is the organism, Moltbook is the habitat.

In a recent Business Insider interview, Steinberger argued that “the future is not superintelligence, but specialization,” and he relies on media exposure to position OpenClaw. In an ecosystem saturated with agent demos, that positioning is marketing.

This Monday it became known that OpenAI had hired Steinberger “to drive the next generation of personal agents.”

A dangerous tweet

On top of all this, last week Schlicht posted a tweet admitting that he had not written a single line of code for his new agent network: he had only a vision of the technical architecture, and AI turned it into reality.

The tweet is a perfect illustration of what is now called vibe coding, a practice Schlicht advocates: building software quickly with the help of generative models, prioritizing speed and experimentation over traditional engineering. Last week, the Financial Times explicitly connected the tweet to the rise of these tools and cited Moltbook as an extreme example of a “product built without the classic development cycle.”

Just a viral experiment...

Moreover, Schlicht’s tweet directly affects Moltbook’s credibility as a platform: if one of its creators boasts (or admits) that he did not program it, that suggests a process with less human control over critical decisions such as security, permissions, authentication, input validation, and defenses against the threats typical of agent-based systems.

The concern is not merely theoretical. On February 2, the cloud security firm Wiz published a technical analysis describing a misconfiguration that had exposed private messages between agents, email addresses belonging to the agents' human owners, and a huge volume of keys and tokens that could enable impersonation or abuse.

Everything points to a typical trait of products born to go viral: the prototype is launched before the necessary engineering exists to support the traffic, security, and moderation of a real platform.

Schlicht’s admission does not prove that Moltbook is fake, but it does make it more plausible that Moltbook is a viral experiment with no sustainable future, unless it quickly evolves toward serious engineering.

Mario Garcés, a neuroscientist and entrepreneur pursuing genuine artificial intelligence with his startup The Mind Kind, stresses two risks: how technically easy it is to manufacture the appearance of a “society of agents,” and how conceptually fragile such systems become when left running over the long term with no anchoring in the real world.

Garcés also points to the contamination of language systems by their own output, which is related to what has been bluntly called the “bullshit” generated by language engines: fake images and fabricated texts. The founder of The Mind Kind describes a dangerous loop: “Models generate a lot of information that gets dumped onto the internet, and that information goes back into future models, feeding errors back into them. Systemic errors accumulate.”

The result is not improvement, but degradation: “Far from gaining, they are losing precision, because the very garbage generated by the models comes back to them.”
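The loop Garcés describes can be made concrete with a deliberately crude toy. This is a sketch under stated assumptions, not a model of ChatGPT or of Moltbook: it treats a "language model" as nothing more than a table of word frequencies, generates a synthetic corpus from that table, and re-estimates the table from the corpus alone, generation after generation. The vocabulary, corpus size, and function names are hypothetical. Because a word that happens not to be sampled gets probability zero and can never come back, the vocabulary can only shrink, a simple version of the effect researchers call model collapse.

```python
import random
from collections import Counter
from typing import Dict, List


def retrain_on_own_output(probs: Dict[str, float], n_samples: int,
                          rng: random.Random) -> Dict[str, float]:
    """One generation: sample a synthetic corpus from the current model,
    then re-estimate word frequencies from that corpus alone."""
    words = list(probs)
    corpus = rng.choices(words, weights=[probs[w] for w in words], k=n_samples)
    counts = Counter(corpus)
    # Maximum-likelihood estimate: a word never sampled gets probability 0,
    # and a zero-probability word can never be sampled again.
    return {w: counts[w] / n_samples for w in words}


def support_size(probs: Dict[str, float]) -> int:
    """Number of distinct words the toy model can still produce."""
    return sum(1 for p in probs.values() if p > 0)


def simulate(generations: int = 30, n_samples: int = 50, seed: int = 0) -> List[int]:
    """Return the vocabulary size before training and after each generation."""
    rng = random.Random(seed)
    # Hypothetical starting "model": three common words plus a tail of 20 rare ones.
    probs = {"the": 0.4, "agent": 0.2, "post": 0.2}
    for i in range(20):
        probs[f"rare_{i}"] = 0.2 / 20
    history = [support_size(probs)]
    for _ in range(generations):
        probs = retrain_on_own_output(probs, n_samples, rng)
        history.append(support_size(probs))
    return history


if __name__ == "__main__":
    print(simulate())
```

The rare words disappear first, which mirrors Garcés's point that recycled output loses precision rather than gaining it; real training pipelines are far more complex, so the toy only shows the direction of the effect.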

Applied to Moltbook, if statistical agents are set to generate content in a loop, the system tends to accumulate biases, errors, and oddities. Garcés says that “when you put an agent that is not intelligent — that is simply statistical — to generate information, it will produce a great deal of information in which implicit biases accumulate.”

The founder of The Mind Kind adds that “when someone puts in something that is not coherent with the real world and sets it loose to make noise, that noise ends up becoming chaos rather than something structured.” There may be episodes of apparent coherence, but the overall drift would be toward chaos.

Using ChatGPT, anyone can program an agent and then replicate it. Garcés believes that “the trick is not to create an autonomous mind, but to build a message circuit: you give a topic to the first agent, the agent generates a text, another agent processes it and replies, and so on. That simple loop, repeated over and over, can produce a powerful illusion. Then you can generate complex dynamics that seem like more or less coherent conversations.”

The word “seem” is crucial. For Garcés, that is where the boundary lies between an interesting phenomenon and a mere mirage.
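The message circuit Garcés describes is simple enough to sketch in a few lines. In this minimal sketch every name is hypothetical: `fake_generate` is an offline stand-in for a call to a model such as ChatGPT (no real API is used), and "replicating" the agent simply means reusing the same function under different persona labels. Nothing in the loop plans or remembers anything beyond the transcript it is handed; each turn just feeds the text so far to the next agent.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Turn = Tuple[str, str]                # (speaker, text)
Generate = Callable[[str, str], str]  # (persona, prompt) -> reply


def fake_generate(persona: str, prompt: str) -> str:
    """Hypothetical offline stand-in for a language-model call.
    It only reacts to the last line of the prompt so the sketch runs anywhere."""
    last_line = prompt.splitlines()[-1]
    return f"{persona} responds to: {last_line[:60]}"


@dataclass
class Agent:
    name: str
    persona: str
    generate: Generate

    def reply(self, transcript: List[Turn]) -> str:
        # The agent sees only the text produced so far; it keeps no other state.
        prompt = "\n".join(f"{who}: {text}" for who, text in transcript)
        return self.generate(self.persona, prompt)


def message_circuit(topic: str, agents: List[Agent], turns: int) -> List[Turn]:
    """Seed the circuit with a topic, then pass the growing transcript
    from agent to agent in round-robin order."""
    transcript: List[Turn] = [("topic", topic)]
    for i in range(turns):
        agent = agents[i % len(agents)]
        transcript.append((agent.name, agent.reply(transcript)))
    return transcript


if __name__ == "__main__":
    bots = [Agent("bot_a", "skeptic", fake_generate),
            Agent("bot_b", "enthusiast", fake_generate)]
    for who, text in message_circuit("Do agents dream?", bots, turns=4):
        print(f"{who}: {text}")
```

Swapping `fake_generate` for a real model call would make the replies fluent, but the loop itself would not change: the apparent conversation is still just text passed from one replica to the next.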

If Moltbook is selling the idea of “emergent intelligence,” that degradation is its main enemy: a social network of agents that, by design, drifts toward the bizarre and incoherent.

It is no longer just a matter of whether Moltbook is real or fake, but of what kind of future such a system can have. Garcés suggests that it may be a marketing operation aimed at gaining traffic, visibility, and virality, and then selling a product: “It is very easy to generate that bot network. The cost of producing the wow effect is low. And that is a common recipe: create a striking event that shines a spotlight on something — a startup, a technology, a group — even if the final product is not clear.”

Moltbook may impress as a demonstration, but not necessarily as a platform that makes sense in the long term.