The end of artificial intelligence wouldn't mean the end of civilization, but it would represent a colossal loss of efficiency. In a slower, less efficient, and error-prone world, the challenge wouldn't just be adopting the technology, but ensuring business continuity when automated systems suddenly fail.

It's been 24 hours since artificial intelligence shut down. There's a lot of surprise and uncertainty, but no one would say it's the end of civilization, although a sharp drop in productivity is already being felt worldwide. After the first 24 hours of an AI blackout, the most visible effect wouldn't be spectacular but prosaic: many people would abruptly return to manual work on tasks that were already partially automated. There wouldn't be a collapse, but there would be a rapid decline in the effectiveness we had gained through these revolutionary tools.

Mario Garcés, a neuroscientist and founder of The Mind Kind, which works to achieve artificial general intelligence, believes that this blackout, which would reduce productivity in the first moments, "would not be dramatic, although it would have to be taken into account in relation to GDP and certain collateral impacts that could occur in capital markets, or in governments that try to save certain industries."

Different levels

It's unlikely that all AI will disappear at once, but certain specific services could fail, and Garcés believes that "this could happen if maintaining them costs more than they actually provide. Then, some companies might stop supporting them. And if AI remains socially useful even if it's unprofitable, governments could end up funding its infrastructure."

In short, an artificial intelligence blackout would affect customer service, document analysis, fraud detection, clinical support, education, and some automated transport services within the first 24 hours.

Furthermore, in the current phase of AI agents, an outage is more painful than before, because it no longer just interrupts responses: it disrupts delegated work. The agent doesn't just assist; it executes tasks. If it fails, it leaves processes unfinished, forces a return to manual work, and paralyzes automated workflows. The impact would be even greater for companies that already rely on API-connected agents to perform real work, not just to answer questions.
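For readers who build such workflows, the idea of a task falling back to manual work when an agent fails can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `AgentUnavailable`, `run_with_manual_fallback`, and `simulated_agent` are hypothetical names invented here, not part of any real agent framework or vendor API.

```python
from collections import deque


class AgentUnavailable(Exception):
    """Hypothetical error raised when the AI service cannot be reached."""


def run_with_manual_fallback(tasks, agent_call, manual_queue):
    """Try each task on the agent; route it to a human queue if the agent is down.

    Returns the list of results the agent managed to complete.
    """
    completed = []
    for task in tasks:
        try:
            completed.append(agent_call(task))
        except AgentUnavailable:
            # The delegated work is not lost: it is handed back to people.
            manual_queue.append(task)
    return completed


def simulated_agent(task):
    """Stand-in for a real agent: works for the first two tasks, then 'goes dark'."""
    if task["id"] > 2:
        raise AgentUnavailable("simulated service outage")
    return f"done:{task['id']}"


if __name__ == "__main__":
    queue = deque()
    results = run_with_manual_fallback(
        [{"id": i} for i in range(1, 6)], simulated_agent, queue
    )
    print("completed by agent:", results)
    print("waiting for a human:", [t["id"] for t in queue])
```

The point of the sketch is simply that the unfinished work has to land somewhere: without an explicit manual queue, tasks the agent had accepted would be silently dropped rather than delayed.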

Possible and improbable...

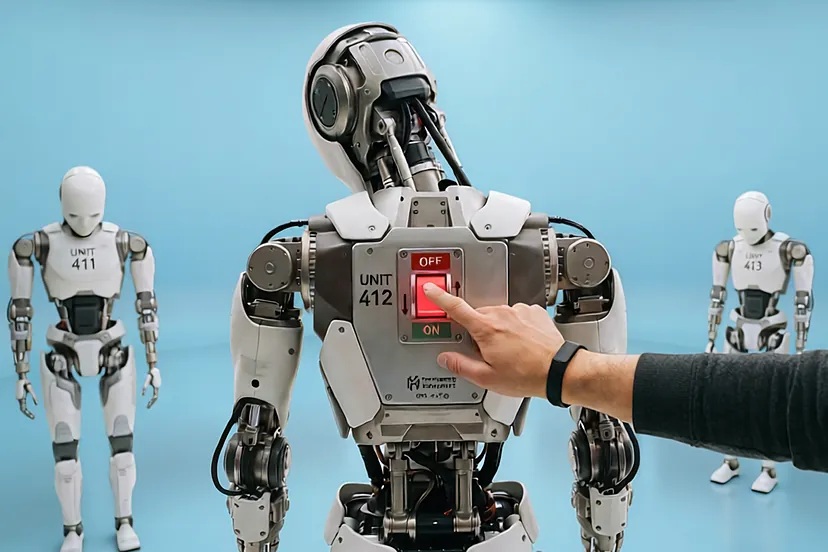

Everything we've seen so far is a hypothetical situation. The reality is that a total and simultaneous global blackout of all AI is highly unlikely, although a partial, sectoral, or temporary blackout is possible. In The AI Index Report 2025, Stanford University points out that "AI is not a single machine or a single button: it is thousands of models, vendors, chips, data centers, embedded applications, enterprise software, and on-premises systems."

And the latest security report from the UK's AI Security Institute concludes that "it is not reasonable to imagine that all the AI on the planet will shut down at once, but it is reasonable to anticipate partial disruptions, regulatory rollbacks, energy limits or supplier failures that will cause AI to stop working as it does now in certain places, sectors or periods."

The first effects

Thus, in the first 24 hours without artificial intelligence, a partial blackout would not stop the world, but it would slow down the systems that had already delegated to AI the first filter, the first draft, the first classification, or the first priority.

And when that first layer disappears, symptoms appear: more time per task, longer queues, more human review, and more errors of omission or priority.

We would also discover how many functions already rely on AI without the user even noticing. Not only would generative chats stop working, but also internal systems for classification, moderation, scoring, search, prediction, and support. This silent dependence aligns with Stanford University's diagnosis that AI is "increasingly embedded" in everyday life and in sectors like healthcare and transportation.

Seven days after the blackout, the problem would cease to be merely one of convenience and would become one of business operations. The issue would no longer be just that people were taking longer, but that entire business processes were beginning to break down: worse early detection, queues that never cleared, more repetitive work, and a significantly reduced capacity to respond.

The OECD notes that among the most frequent uses are data analytics and fraud detection in finance, and production and maintenance processes in manufacturing.

If AI were to fail for a week in banking and compliance, banks would still function, but less effectively. There would be more false alarms, more manual review, longer delays in fraud investigations, and a greater risk of missing critical cases in time. This translates to reduced efficiency, poorer prioritization, and a larger backlog of work.

Another clear example appears in manufacturing. The OECD points out that predictive maintenance is one of the most mature and impactful AI use cases in industry, "because it allows for the detection of anomalies, the anticipation of failures, and the shift from reactive or schedule-based maintenance to dynamic, data-driven maintenance."

If AI becomes unavailable for a week, the factory doesn't disappear, but it reverts to a more cumbersome system: more manual inspections, more precautionary or late maintenance, worse production planning, and a higher probability of unexpected shutdowns.

In cybersecurity, the logic is similar, but more dangerous. We would need more time to detect incidents, more human triage, and a greater likelihood that a weak signal would be buried among thousands of irrelevant events. That's a very concrete example of "reduced responsiveness."

In public administration and employment services, the damage also becomes structural within a week. The OECD documents that approximately half of the public employment services in member countries already enhance their tools with AI: 17% use chatbots for information, 17% use profiling tools, and 20% use matching systems to recommend vacancies. If these functions fail for even a week, the problem isn't just reduced digital convenience: applications pile up, case classification worsens, the capacity to guide job seekers is diminished, and officials spend more time on basic questions and less on complex cases.

After a month of partial AI shutdown, the problem would no longer be just inconvenience or occasional delays, but a structural loss of performance in many sectors. The economy would continue to function, but with less speed, less capacity, and worse prioritization. The key is that AI is no longer a future promise, but a tool integrated into real-world activities such as healthcare, transportation, banking, customer service, software development, administration, and education.

In healthcare, medicine itself wouldn't disappear, but a layer that currently helps prioritize cases, expedite diagnoses, and reduce waiting times would be lost. This would create more bottlenecks in radiology, emergency services, and manual test review.

The transportation and mobility system wouldn't grind to a halt, but autonomous services and optimization tools would be negatively impacted. There would be less capacity for adjustment, poorer forecasting, and less efficient mobility.

In banking, insurance, and compliance, the impact would be significant because AI already filters enormous volumes of alerts and helps detect fraud. Without it, there would be more manual review, more false positives, more delays, and a greater risk of missing important cases.

In customer service, the service would still exist, but with persistent queues, more escalations to senior staff, longer resolution times, and more uneven quality.

In other sectors such as software development, programming would not be abandoned, but there would be fewer deliveries, more delays, and less capacity to absorb new tasks.

In public administration and employment services, automatic classification, vacancy matching, and initial attention would be lost, leading to more accumulated files and less responsiveness.

And in education, schools and universities would continue to operate, but with less support in tutoring, writing, preparing materials, and administrative tasks.

The damage after a month without AI would be competitive, not marginal. We wouldn't be talking about a civilizational collapse, but rather real systems operating a step below the capacity and efficiency they had become accustomed to.

Readoption

Furthermore, in the event of an AI blackout, companies would not only have to consider temporarily doing without AI, but also readopting it with a different approach. Less focus on "putting AI everywhere" and more on "using AI where it can survive audits, outages, vendor changes, and a temporary return to manual labor."

An OECD report offers a key insight into readoption: If there were a partial AI shutdown, companies couldn't simply wait for it to return. They would have to move from "adopting AI" to ensuring business continuity without it: reopening manual processes, prioritizing tasks, and deciding which uses to bring back first. Not all uses would be equally urgent. Some could wait, others would require human substitutes, and the most critical should only be reintroduced once they demonstrated reliability, control, and oversight. Using artificial intelligence also requires preparing to work temporarily without it.

This readaptation wouldn't only reflect poorly on PwC's US CEO, Paul Griggs, who declared this week that partners who resist the advancement of AI will have no place at the firm. Internal AI champions would also face a tough test. Those who merely generate enthusiasm without preparing alternative plans would lose credibility, while those who design controls and ensure continuity would gain influence. Bonuses for mastering AI would likely decrease, especially for profiles heavily reliant on specific tools. Those who, in addition to using artificial intelligence, know how to manage processes, risks, data, and a temporary return to manual work would continue to be more valuable.

A system failure...

The hypothesis of an AI blackout is often imagined as a complete shutdown, with black screens, out-of-service models, and an entire technology vanishing at once. But the more plausible scenario is closer to a gradual breakdown: AI becomes too critical for governments, businesses, and services before it is robust, useful, and auditable enough, and then it fails precisely where it was considered guaranteed to work. Mario Garcés, founder of The Mind Kind, cites the clash between Anthropic, the startup founded by Dario Amodei, and Donald Trump, and the economic fragility of the gigantic physical infrastructure that sustains the new AI boom.

- Regarding the conflict between Trump and Anthropic, the National Institute of Standards and Technology (NIST) defines "trustworthy" AI as that which is valid, transparent, accountable, secure, and resilient. In its specific profile for generative AI, NIST adds that organizations must prepare alternative processes, deactivation mechanisms, and communication plans for disabling or retiring these systems. AI can degrade, behave inconsistently, or simply not be trustworthy enough for critical uses. Making it essential infrastructure before addressing these issues is not a philosophical abstraction. It is an operational risk, and this is where the conflict between Anthropic and the Trump administration comes in. The Pentagon recently defended in court its decision to declare Anthropic a "national security supply chain risk" after the company refused to lift restrictions preventing the use of its technology in autonomous weapons and domestic surveillance. Anthropic challenged the decision in court, arguing that the measure was retaliation that could cost it billions of dollars. The company, however, did not oppose collaborating with defense in general: in July 2025, it announced a $200 million agreement with the Department of Defense to develop AI capabilities applied to national security. The clash arose over two specific exceptions: fully autonomous weapons and mass domestic surveillance.

- This makes the case a lesson in what a functional AI "shutdown" would look like. Trump and the Pentagon wanted to treat the technology as a critical capability already available for national security. Anthropic responded that current frontier models are unreliable for fully autonomous weapons and that mass surveillance of Americans violates fundamental rights. A shutdown isn't just about a server going down or an API becoming unresponsive. It can also mean discovering too late that the technology can't be used where political power had already budgeted for it to be mature.

- The other side of the problem is that AI doesn't float in the air; it rests on chips, data centers, networks, and a massive flow of electricity. The International Energy Agency points out that "there is no AI without energy," specifically without electricity for data centers. And the OECD describes AI infrastructure as "a highly complex and often highly concentrated ecosystem." This concentration doesn't make a collapse inevitable, but it does mean that any financial, technical, or regulatory failure will have a multiplied effect.

- Thus, Mario Garcés speaks of the "expiration" of data centers. There isn't a fixed, universal five-year rule for all hardware, but there is an industry reality: these assets depreciate rapidly, and technological acceleration forces a review of their useful life. Amazon reported in 2024 that it was reducing the useful life of some of its servers and network equipment from six to five years, and this review would cut its 2025 operating profit by $1.3 billion. Obsolescence isn't an abstract fear, and it's worth asking what happens if infrastructure grows much faster than the value it actually generates. Therefore, the discussion no longer revolves solely around whether AI will change the world, but whether the numbers add up.

- None of this in itself proves a bubble destined to burst, but it paints a picture of a structure highly sensitive to delays, drops in demand, or lower-than-promised revenues. When Mario Garcés speaks of a potential "blackout" linked to the failure of the companies currently leading the way in AI, he isn't describing the disappearance of the technology. He's referring to less available capacity, higher prices, less competition, greater dependence on a few providers, and consequently, greater vulnerability for all those who have already made AI a critical component. In that scenario, AI would still exist, but the service would be more expensive, scarcer, and more uncertain.

- AI can become critical before it is truly reliable and auditable, as the clash between Anthropic and Trump demonstrates. It can also become strategically indispensable before the economic model that underpins it proves to be long-term stable. If both of these things happen, the AI "shutdown" won't be an apocalyptic event. The big question is no longer whether AI can fail. The question is whether we are making it indispensable too soon.